
Quantum computing for beginners can feel overwhelming at first: talk of qubits, superposition, and entanglement sounds like science fiction. But here’s the truth: quantum computing is real, it is working right now, and in 2026 you can access an actual quantum computer from your browser for free. The United Nations designated 2025 the International Year of Quantum Science and Technology, and the momentum from that year makes this a good time to start understanding the technology.

This complete beginner’s guide breaks down quantum computing into plain English. No physics degree required. No advanced mathematics. Just clear explanations of what quantum computing is, how it works, why it matters, and how you can start exploring it today — plus all the major 2025 breakthroughs you need to know about, from Google’s Willow chip to Microsoft’s Majorana 1.


Quantum Computing for Beginners: What Is It, Really?

Quantum computing for beginners starts with one question: what makes a quantum computer different from the laptop or phone you’re using right now?

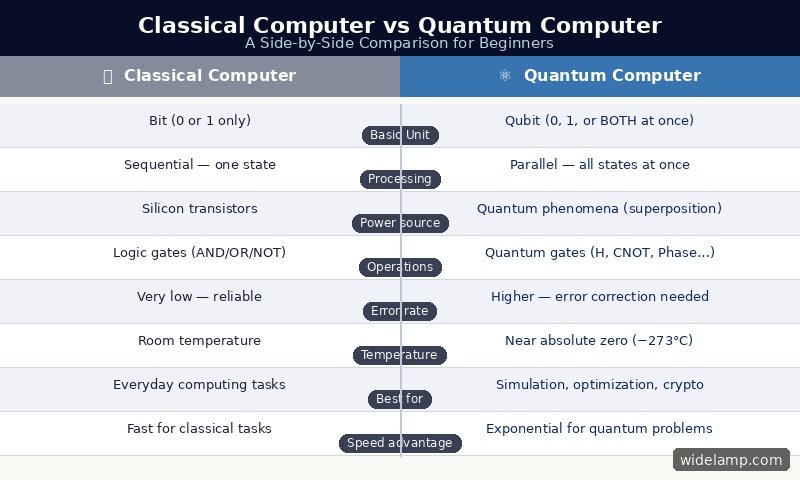

Every device you use today is a classical computer. It stores and processes information as bits — tiny electronic switches that are either OFF (0) or ON (1). Every word you type, every image you view, every calculation your device makes is built from billions of these simple 0s and 1s flipping on and off at incredible speed.

A quantum computer uses a completely different fundamental unit: the qubit (quantum bit). A qubit is a physical object — often a tiny superconducting circuit cooled to near absolute zero, or a single trapped ion — that obeys the rules of quantum mechanics rather than classical physics. And quantum mechanics allows something classical bits can never do: a qubit can be 0, 1, or a combination of both at the same time.

This is not a glitch or an approximation. It is a fundamental property of the universe at very small scales — and it gives quantum computers an entirely different kind of power for certain types of problems. Quantum computing does not replace your laptop. It solves a different class of problem, at a scale that classical computers cannot match.

⚛ Definition — Quantum Computing for Beginners

Quantum computing is a type of computation that uses the laws of quantum mechanics (superposition, entanglement, and interference) to process information in ways that classical computers cannot. It is built on qubits instead of bits, in its most common superconducting form runs near absolute zero (about −273°C), and is being used in 2025 by IBM, Google, Microsoft, and hundreds of research institutions worldwide to tackle problems in chemistry, cryptography, finance, and artificial intelligence.

Quantum Computing for Beginners: Classical vs Quantum — Side by Side

The clearest way to understand quantum computing for beginners is to compare it directly to the classical computers you already know. Here is how they differ across every key dimension:

The most important thing to understand from this comparison: quantum computers are not faster versions of your laptop. They are a completely different kind of machine. Your classical computer is better at everyday tasks — email, documents, streaming, browsing. A quantum computer is designed for a specific class of problems where the number of possible combinations is so large that classical computers would take millions of years to search through them all.

Think of cracking a complex molecule’s behavior for drug discovery. A classical supercomputer must approximate — it cannot model all the quantum interactions exactly. A quantum computer can simulate those quantum interactions naturally, because it operates by the same rules of physics. That is the core promise of quantum computing for beginners to grasp: not speed in general, but an entirely new approach to a specific kind of hard problem.

7 Essential Quantum Computing Concepts for Beginners

Concept 1: The Qubit — The Building Block of Quantum Computing

A qubit is the quantum equivalent of a classical bit — but with one astonishing difference. A classical bit is always definitively 0 or 1. A qubit, thanks to the quantum property of superposition, can exist as a blend of 0 and 1 simultaneously — until the moment you measure it, at which point it collapses to a definite 0 or 1.

Physically, a qubit can be built from many different things: the spin of an electron (up = 1, down = 0), the polarization of a photon, the energy levels of a trapped ion, or the current flowing in a tiny superconducting loop. IBM and Google primarily use superconducting qubits, cooled to temperatures colder than outer space. IonQ and Quantinuum use trapped ions. Microsoft is betting on topological qubits — a radically different physical approach announced in 2025 with the Majorana 1 chip.

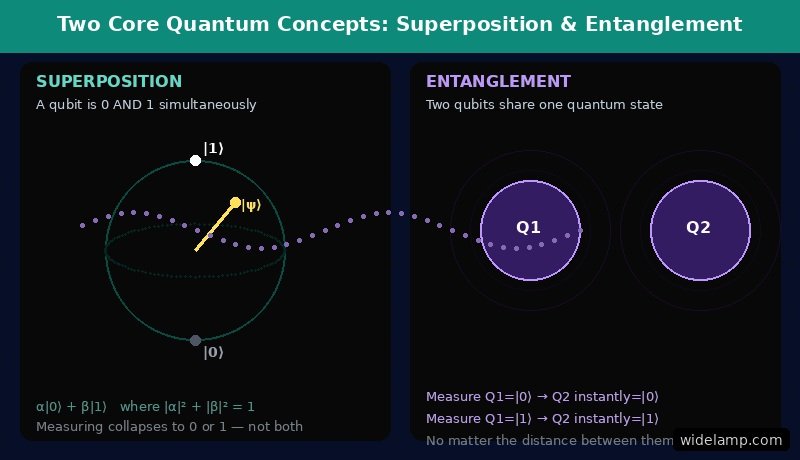

The mathematical state of a qubit is written as: |ψ⟩ = α|0⟩ + β|1⟩ — where α and β are complex numbers representing the probability amplitudes of measuring 0 or 1. The constraint is |α|² + |β|² = 1. This equation, while it looks intimidating, simply means: a qubit holds both possibilities at once, with certain probabilities, until measured.
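To make the equation concrete, here is a minimal Python sketch of a single qubit as a pair of amplitudes. The function names (`normalize`, `probabilities`, `measure`) are made up for this illustration and do not come from any quantum SDK; the code only simulates the arithmetic on an ordinary computer.

```python
import math
import random

def normalize(alpha, beta):
    """Scale two complex amplitudes so that |alpha|^2 + |beta|^2 = 1."""
    norm = math.sqrt(abs(alpha) ** 2 + abs(beta) ** 2)
    if norm == 0:
        raise ValueError("at least one amplitude must be non-zero")
    return alpha / norm, beta / norm

def probabilities(alpha, beta):
    """Return (P(0), P(1)) for the state alpha|0> + beta|1>."""
    return abs(alpha) ** 2, abs(beta) ** 2

def measure(alpha, beta, rng=random):
    """Simulate one measurement: 0 or 1, weighted by the probabilities."""
    p0, _ = probabilities(alpha, beta)
    return 0 if rng.random() < p0 else 1

# Example: amplitudes 1 and 1j describe an equal blend of 0 and 1.
alpha, beta = normalize(1, 1j)
p0, p1 = probabilities(alpha, beta)
print(p0, p1)                # each close to 0.5
print(measure(alpha, beta))  # a single 0 or 1, chosen at random
```

Each call to `measure` returns a plain 0 or 1, which is the "collapse" described above: the amplitudes only set the odds.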

Concept 2: Superposition — Being in Two States at Once

Superposition is the quantum property that allows a qubit to be 0 and 1 at the same time. The best analogy for beginners: imagine a coin spinning in the air. While it spins, it is neither purely heads nor purely tails — it is in a superposition of both. The moment it lands and you look at it, it becomes one or the other.

A qubit in superposition is like that spinning coin — it holds both possibilities simultaneously. And here is why this matters so much for computing: if you have 2 qubits in superposition, they can represent 4 states simultaneously (00, 01, 10, 11). With 3 qubits: 8 states. With 10 qubits: 1,024 states. With n qubits: 2ⁿ states — all at once.

This exponential scaling is the source of quantum computing’s potential power. A quantum computer with 300 qubits in superposition can hold more simultaneous states than there are atoms in the observable universe. A classical computer has to track these possibilities one at a time. A quantum computer can act on all of them in a single operation, but measuring it returns only one outcome, so an algorithm must use interference to make the right answer the likely one.
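The doubling is easy to check with a few lines of Python. This sketch assumes the commonly quoted rough figure of 10^80 atoms in the observable universe and, as a further assumption, 16 bytes to store each complex amplitude on a classical machine.

```python
ATOMS_IN_OBSERVABLE_UNIVERSE = 10 ** 80  # rough, commonly quoted estimate

def basis_states(n_qubits):
    """Number of distinct bit patterns that n qubits can superpose."""
    return 2 ** n_qubits

def statevector_bytes(n_qubits, bytes_per_amplitude=16):
    """Classical memory needed to store one amplitude per bit pattern."""
    return basis_states(n_qubits) * bytes_per_amplitude

for n in (2, 3, 10, 300):
    print(n, "qubits ->", basis_states(n), "states")

print(basis_states(300) > ATOMS_IN_OBSERVABLE_UNIVERSE)  # True
print(statevector_bytes(30) / 2 ** 30, "GiB for 30 qubits")
```

The memory figure hints at why classical simulation runs out of road quickly: every extra qubit doubles the storage needed.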

Concept 3: Entanglement — Quantum’s “Spooky” Connection

Entanglement is a quantum phenomenon so strange that Albert Einstein famously called it “spooky action at a distance” — and spent years trying to prove it was impossible. Quantum physics proved him wrong.

When two qubits become entangled, they form a linked pair. Measuring one qubit instantly determines the state of the other — regardless of how far apart they are. This is not because information travels between them. It is because they share a single quantum state that cannot be described independently.

For quantum computing beginners, entanglement matters because it allows quantum computers to coordinate information across many qubits simultaneously. When you apply an operation to one entangled qubit, you change the joint state it shares with every qubit entangled with it, although no usable signal passes between them. This is how quantum algorithms can explore vast solution spaces in ways that have no classical equivalent. Entanglement is the quantum equivalent of having all your processors deeply connected at a physical level: not just through wires, but through the fabric of physics itself.
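A small simulation shows what "linked" means in practice. The sketch below assumes the simplest entangled pair, the Bell state (|00⟩ + |11⟩)/√2, and samples measurement results from it on a classical computer; the `sample` helper is hypothetical and written only for this example.

```python
import math
import random

# Amplitudes of the Bell state (|00> + |11>) / sqrt(2), keyed by outcome.
BELL_STATE = {
    "00": 1 / math.sqrt(2),
    "01": 0.0,
    "10": 0.0,
    "11": 1 / math.sqrt(2),
}

def sample(state, shots, seed=0):
    """Draw `shots` measurement outcomes, weighted by |amplitude|^2."""
    rng = random.Random(seed)
    outcomes = list(state)
    weights = [abs(amp) ** 2 for amp in state.values()]
    counts = {outcome: 0 for outcome in outcomes}
    for result in rng.choices(outcomes, weights=weights, k=shots):
        counts[result] += 1
    return counts

counts = sample(BELL_STATE, shots=1000)
print(counts)  # only "00" and "11" ever appear
```

Each qubit on its own looks like a fair coin, yet the two results always agree. That perfect correlation is the signature of entanglement.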

Concept 4: Interference — How Quantum Computers Find Answers

Superposition lets a quantum computer explore many possibilities at once. Entanglement links qubits together. But without the third principle — interference — a quantum computer would just give you a random answer from all those possibilities. Interference is how it finds the right one.

Quantum interference works like waves in water. When two waves meet, they can add together (constructive interference — the wave gets bigger) or cancel each other out (destructive interference — the wave disappears). Quantum algorithms are designed so that the “waves” corresponding to wrong answers cancel each other out, while the waves for correct answers reinforce each other. The result: when you measure the qubits at the end, the correct answer has the highest probability of appearing.
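The smallest example of this cancellation uses the Hadamard gate (covered in Concept 5) twice in a row. The sketch below is plain Python arithmetic on two amplitudes, not code for real hardware, and the `apply` helper is a name chosen for this illustration.

```python
import math

S = 1 / math.sqrt(2)
H = [[S, S],
     [S, -S]]  # Hadamard gate as a 2x2 matrix

def apply(gate, state):
    """Multiply a 2x2 gate matrix by a 2-entry amplitude vector."""
    return [
        gate[0][0] * state[0] + gate[0][1] * state[1],
        gate[1][0] * state[0] + gate[1][1] * state[1],
    ]

zero = [1.0, 0.0]        # the state |0>
once = apply(H, zero)    # equal superposition of |0> and |1>
twice = apply(H, once)   # contributions to |1> cancel, those to |0> add

print([round(a, 3) for a in once])
print([round(a, 3) for a in twice])
```

After the second gate, the two contributions to |1⟩ have opposite signs and cancel (destructive interference), while the two contributions to |0⟩ add up (constructive interference), so the qubit is back at |0⟩ with certainty.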

Think of noise-cancelling headphones. They generate sound waves that are the exact opposite of ambient noise, causing the two to cancel and leaving you with silence. Quantum interference does the same for wrong answers in a computation. This is why quantum algorithms — like Grover’s search and Shor’s factoring algorithm — are so much faster than classical equivalents for certain problems.
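As a rough illustration of how an algorithm steers interference toward the right answer, here is one round of Grover's search over four items, simulated classically. The function name and the choice of marked item are assumptions for this sketch. A real quantum computer performs these steps with gates rather than Python lists.

```python
import math

def grover_iteration(amplitudes, marked):
    """One Grover round: flip the marked item's sign, then reflect about the mean."""
    flipped = [-a if i == marked else a for i, a in enumerate(amplitudes)]
    mean = sum(flipped) / len(flipped)
    return [2 * mean - a for a in flipped]

n_items = 4
start = [1 / math.sqrt(n_items)] * n_items  # equal superposition of all items
after = grover_iteration(start, marked=2)
probs = [a ** 2 for a in after]

print([round(p, 3) for p in probs])
```

With four items, a single round pushes all of the probability onto the marked item and cancels the rest. Larger searches need more rounds, roughly proportional to the square root of the number of items.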

💡 Key Insight for Beginners

Quantum computing is not magic — it is applied physics. Superposition lets you explore all paths simultaneously. Entanglement links qubits so they act as a coordinated system. Interference cancels wrong answers and amplifies the right one. Together, these three principles make certain problems that would take a classical computer millions of years solvable in minutes. Understanding all three is the real foundation of quantum computing for beginners.

Concept 5: Quantum Gates — How Quantum Programs Are Built

In classical computing, logic gates (AND, OR, NOT) are the building blocks of every program. In quantum computing, quantum gates perform operations on qubits — rotating their states on the Bloch sphere, creating superposition, and entangling qubits with each other.

The most fundamental quantum gate for beginners is the Hadamard (H) gate. Apply it to a qubit in state |0⟩, and it puts that qubit into an equal 50/50 superposition of |0⟩ and |1⟩. Another essential gate is the CNOT (Controlled-NOT) gate, which acts on two qubits: if the control qubit is |1⟩, it flips the target qubit. Applied after a Hadamard on the control, CNOT entangles the pair. These two gates alone are not enough for every computation, but combined with a small set of single-qubit rotations (such as the T gate) they can build any quantum circuit.
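Here is a minimal sketch of those two gates working together, written as plain Python on a list of four amplitudes ordered |00⟩, |01⟩, |10⟩, |11⟩. The function names are invented for this example; an SDK such as Qiskit expresses the same circuit with its own gate calls.

```python
import math

def hadamard_on_first(state):
    """Apply H to the first qubit of a two-qubit state [a00, a01, a10, a11]."""
    s = 1 / math.sqrt(2)
    a00, a01, a10, a11 = state
    return [s * (a00 + a10), s * (a01 + a11),
            s * (a00 - a10), s * (a01 - a11)]

def cnot_first_controls_second(state):
    """Flip the second qubit wherever the first qubit is 1 (swap a10 and a11)."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]

state = [1.0, 0.0, 0.0, 0.0]               # start in |00>
state = hadamard_on_first(state)           # (|00> + |10>) / sqrt(2)
state = cnot_first_controls_second(state)  # (|00> + |11>) / sqrt(2)

print([round(a, 3) for a in state])
```

The final state has weight only on |00⟩ and |11⟩: the two qubits are now entangled, produced by nothing more than one H and one CNOT.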

A sequence of quantum gates applied to qubits forms a quantum circuit — the quantum equivalent of a classical program. IBM’s Qiskit SDK lets anyone write these circuits in Python and run them on real IBM quantum hardware via the cloud. Microsoft’s Azure Quantum, Google’s Cirq, and Amazon Braket provide similar access. Quantum computing for beginners is no longer just theoretical — you can run real quantum programs today.

Concept 6: Quantum Error Correction — The Biggest Unsolved Challenge

Here is the uncomfortable truth about quantum computing for beginners: current quantum computers make a lot of errors. Qubits are extraordinarily fragile — vibrations, temperature fluctuations, electromagnetic interference, even the act of measuring a nearby qubit can cause a qubit to lose its quantum state in a process called decoherence. When a qubit decoheres, it loses its superposition and behaves like an ordinary classical bit, producing wrong results.

For years, this was the fundamental barrier to quantum computing at scale. Adding more qubits just added more errors — the error rate grew faster than the computational power. The field was stuck.

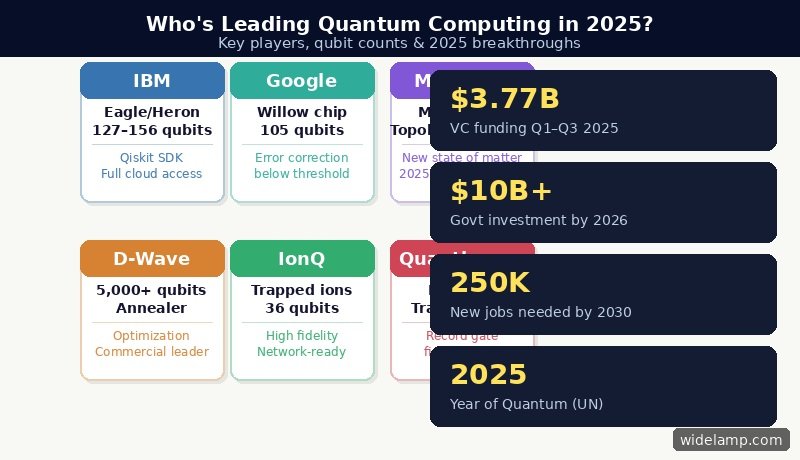

Then came the breakthrough that set the stage for 2025: in December 2024, Google demonstrated “below-threshold” operation with its Willow chip, meaning that as more physical qubits were devoted to error correction, logical errors decreased exponentially rather than accumulating. This is the condition that makes fault-tolerant quantum computing theoretically possible, and Willow demonstrated it experimentally for the first time at meaningful scale. The challenge the field had pursued for nearly thirty years had been solved in principle.
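The idea of a threshold can be illustrated with a much simpler classical stand-in. The sketch below assumes a repetition code: store each bit in n copies and take a majority vote. This is an analogy only, not the quantum code Google ran on Willow, and the error rates are made-up inputs.

```python
from math import comb

def logical_error_rate(p, n):
    """Chance that a majority of n copies flip, each with probability p."""
    return sum(
        comb(n, k) * p ** k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )

for p in (0.01, 0.6):  # one rate below this toy code's threshold (0.5), one above
    rates = [logical_error_rate(p, n) for n in (3, 5, 7)]
    print(p, ["%.6f" % r for r in rates])
```

Below the threshold, adding copies makes the encoded bit more reliable at every step. Above it, adding copies makes things worse. "Below-threshold" operation means the hardware has crossed onto the good side of that line.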

Companies announcing error-correction developments in 2025 included QuEra, Alice & Bob, Microsoft, Google, IBM, Quantinuum, IonQ, Nord Quantique, Infleqtion, and Rigetti — making 2025 the year that error correction progress became industry-wide rather than the domain of one or two leading labs.

Concept 7: Quantum Advantage — When Quantum Beats Classical

“Quantum advantage” (sometimes called quantum supremacy) is the point at which a quantum computer solves a specific problem faster than any classical computer could — not just a little faster, but exponentially faster in a way that cannot be replicated classically regardless of how much classical hardware you throw at the problem.

For quantum computing beginners, it is important to understand that quantum advantage is not a single finish line — it is problem-specific. A quantum computer may have a massive advantage for simulating molecular chemistry, a moderate advantage for certain optimization problems, and no advantage at all for streaming video or running a spreadsheet.

The most important real-world domains where quantum advantage is expected or already emerging include: simulating drug molecules for pharmaceutical research, cracking and building next-generation encryption systems, optimizing complex logistics networks (supply chains, traffic, financial portfolios), accelerating machine learning for AI, and modeling climate systems and materials at the atomic level.

“Quantum computing is where artificial intelligence was in 2012. The foundations are being laid right now. The breakthroughs of 2024 and 2025 have genuinely changed what the next decade looks like — and the people learning quantum computing today will be the ones building that future.”

Quantum Computing for Beginners: 2025 Breakthroughs You Must Know

2025 was a watershed year for quantum computing: the year the technology shifted from a research curiosity to a genuine industry-wide transition. Here are the most important developments every beginner should know:

2025: the year quantum error correction went from one lab’s achievement to an industry-wide breakthrough.

DEC 2024

Google Willow Chip

Demonstrated “below-threshold” quantum error correction for the first time at scale — errors decrease as more qubits are added. A 30-year goal achieved. The single most important quantum milestone since 2019.

FEB 2025

Microsoft Majorana 1

After 17 years of research, Microsoft announced a topological qubit chip using a new state of matter — “topoconductors.” Topological qubits are inherently more stable, potentially requiring far fewer physical qubits per logical qubit.

APR 2025

Fujitsu & RIKEN 256-Qubit

Japan’s Fujitsu and RIKEN research institute announced a 256-qubit superconducting quantum computer — four times larger than their 2023 system — with a 1,000-qubit machine planned for 2026.

2025

Nobel Prize in Physics

Three scientists received the 2025 Nobel Prize in Physics for their foundational work on superconducting quantum circuits in the 1980s — the technology underpinning most modern quantum computers, including those from IBM and Google.

2025 TARGET

IBM Kookaburra — 4,158 Qubits

IBM’s roadmap targets the Kookaburra processor: 1,386 qubits per chip, with 3 chips connected via quantum communication links to form a 4,158-qubit multi-chip system, which would be the largest IBM quantum system to date.

UN 2025

International Year of Quantum

The United Nations designated 2025 the International Year of Quantum Science and Technology — recognizing quantum computing, quantum communication, and quantum sensing as global strategic priorities for science and economy.

What Can Quantum Computing Actually Do? Real-World Applications for Beginners

💊 Drug Discovery

Simulating how drug molecules interact with biological proteins at the quantum level. Google and Boehringer Ingelheim have studied how a quantum computer could model Cytochrome P450, a key human enzyme, and estimated that a sufficiently large fault-tolerant machine could do so faster and more accurately than classical methods.

🔐 Cryptography

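Shor’s algorithm, run on a sufficiently large fault-tolerant quantum computer, could break RSA encryption, the security standard protecting much of today’s internet traffic. No existing machine is large enough to do this yet, but governments worldwide are already transitioning to post-quantum cryptography. NIST finalized its first quantum-resistant standards in 2024.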
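To see why period finding threatens RSA, here is a toy sketch of the classical arithmetic that surrounds Shor's algorithm, applied to N = 15. The period is found by brute force here, which is exactly the step a quantum computer would speed up. The function names and the choice of a = 7 are assumptions for illustration.

```python
from math import gcd

def find_period(a, n):
    """Smallest r > 0 with a**r % n == 1, found by brute force."""
    if gcd(a, n) != 1:
        raise ValueError("a and n must share no common factor")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r

def factor_from_period(a, n):
    """Try to split n using the period of a; return None if this a fails."""
    r = find_period(a, n)
    if r % 2 == 1:
        return None              # odd period: pick another a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None              # trivial case: pick another a
    p = gcd(half - 1, n)
    return p, n // p

print(find_period(7, 15))         # period of 7 modulo 15
print(factor_from_period(7, 15))  # the two factors of 15
```

For a real RSA modulus with hundreds of digits, the brute-force loop in `find_period` would never finish, which is the part Shor's algorithm replaces.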

📈 Finance & Optimization

Portfolio optimization, risk analysis, fraud detection, and complex market simulations. JPMorgan Chase announced a $10 billion initiative naming quantum computing as a core strategic technology. Banks are already running experimental quantum algorithms.

🌍 Climate & Materials

Designing better batteries, solar cells, fertilizers, and carbon-capture catalysts by simulating molecular behavior at the quantum level. Problems that classical supercomputers approximate, quantum computers can model exactly.

🧠 Artificial Intelligence

Quantum machine learning algorithms may provide speedups for training AI models on massive datasets. Conversely, AI is already being used to optimize quantum circuit designs and improve error correction — a mutually reinforcing relationship.

✈ Logistics

Optimizing airline schedules, supply chains, delivery routes, and traffic systems across millions of variables simultaneously — a class of combinatorial optimization problem where quantum algorithms show clear potential advantage.
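To get a feel for why these problems are hard, the sketch below brute-forces a tiny delivery route in Python and counts how quickly the number of possible orderings grows. The four-stop distance table is invented for the example, and nothing here is a quantum algorithm. It only shows the classical search space that such algorithms aim to handle better.

```python
from itertools import permutations
from math import factorial

def shortest_route(distances):
    """Try every visiting order that starts and ends at stop 0."""
    n = len(distances)
    best = None
    for order in permutations(range(1, n)):
        path = (0, *order, 0)
        length = sum(distances[a][b] for a, b in zip(path, path[1:]))
        if best is None or length < best[0]:
            best = (length, path)
    return best

# Hypothetical distances between 4 stops (symmetric table).
DISTANCES = [
    [0, 2, 9, 10],
    [2, 0, 6, 4],
    [9, 6, 0, 3],
    [10, 4, 3, 0],
]

best = shortest_route(DISTANCES)
print(best)
for stops in (5, 10, 20):
    print(stops, "stops ->", factorial(stops), "possible orderings")
```

Four stops can be checked instantly, but the count of orderings grows factorially, so exhaustive search stops being practical after a few dozen stops.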

“Quantum computers will not replace classical computers — any more than a helicopter replaces a car. They solve a completely different class of problem. But for those specific problems — drug molecules, encryption, optimization — quantum is not just faster. It is the only tool that can work.”

Quantum Computing for Beginners: Types of Quantum Hardware in 2026

For quantum computing beginners, it helps to know that there are several fundamentally different ways to build a qubit. The industry has not yet converged on one “winner”: different physical approaches have different strengths and weaknesses.

| Qubit Type | Who Uses It | Strength | Challenge |
|---|---|---|---|
| Superconducting | IBM, Google, Rigetti | Most mature, fast gate speeds, scalable chip fabrication | Requires cooling to −273°C; short coherence times |
| Trapped Ion | IonQ, Quantinuum | Very high fidelity; long coherence times; all-to-all connectivity | Slower gate speeds; harder to scale to large numbers |
| Photonic | PsiQuantum, Xanadu | Room temperature possible; natural fit for quantum networking | Photon loss; deterministic gates remain challenging |
| Neutral Atom | QuEra, Atom Computing | Highly reconfigurable; demonstrated 256-qubit arrays with high fidelity | Slower gate speeds; still proving scalability |
| Topological | Microsoft (Majorana 1) | Intrinsically error-protected; potentially far fewer qubits needed | Still in early development; requires new materials science |

Quantum Computing for Beginners: How to Start Learning Today

For Absolute Beginners — No Code Required

STEP 01

IBM Quantum Learning

Free structured courses at learning.quantum.ibm.com — from absolute beginner to advanced circuit building. No prior physics or coding experience required to start the first module.

learning.quantum.ibm.com →

STEP 02

IBM Quantum Platform (Free)

Create a free account at quantum.ibm.com and run real quantum circuits on actual IBM quantum hardware — no cost, no hardware required, accessible from any browser worldwide.

quantum.ibm.com →

STEP 03

Qiskit (Python SDK)

pip install qiskit and write your first quantum circuit in Python. The world’s most-used quantum SDK with 550,000+ registered users, free documentation, and an active global community.

docs.quantum.ibm.com →

STEP 04

MIT Quantum Courses (Free Audit)

MIT xPRO offers a 2-course quantum computing program taught by Peter Shor (Shor’s algorithm inventor) and Isaac Chuang. Audit free or earn a certificate. Designed for working professionals.

xpro.mit.edu →

Quantum Computing Careers: The Opportunity is Now

For students and professionals considering a quantum computing career, the timing is extraordinary. Only one qualified candidate exists for every three specialized quantum positions globally, and US quantum-related job postings tripled between 2011 and mid-2024. McKinsey estimates that over 250,000 new quantum professionals will be needed globally by 2030. This is not a future opportunity but a present one, and the shortage means that people who start learning quantum computing today can be contributing meaningfully to real quantum projects within 2–3 years.

Quantum computing roles range from quantum hardware engineers and algorithm researchers to quantum software developers, quantum error correction specialists, and enterprise quantum consultants. The last of these roles, helping businesses understand how to apply quantum computing to their specific industry, requires far less physics depth and is increasingly in demand as the technology matures.

⚡ Research Spotlight — 2025

The United Nations has designated 2025 as the International Year of Quantum Science and Technology. The stakes are high — quantum computers would provide access to tremendous data processing power compared to what we have today. They will not replace normal computers, but having this kind of computational power will advance medicine, chemistry, materials science and other fields. Private and government investment exceeded $20 billion cumulatively by early 2026, with the US, China, and EU each running major national quantum programmes alongside private sector activity.

“There is only one quantum professional available for every three open positions globally. The talent shortage is real, the investment is accelerating, and the companies that hire quantum talent today will define the technology landscape of the next 20 years. Quantum computing for beginners is not just education — it is a career strategy.”

🏆 Challenge — Guaranteed Reward for the Best Answer

Prove You Understand Quantum Computing for Beginners

In your own words, explain the difference between superposition and entanglement — and give one real-world analogy for each that a 10-year-old could understand. Then explain: why did Google’s Willow chip breakthrough in 2024 matter so much for the future of quantum computing?

We guarantee a reward for the clearest, most creative explanation. Open to students, professionals, and curious minds worldwide.

✉ Send your answer to contact@widelamp.com before 15 May 2026.

Frequently Asked Questions: Quantum Computing for Beginners

Q: What is quantum computing in simple terms for beginners?

Q: Can I try quantum computing for free as a beginner?

Q: Will quantum computers replace regular computers?

Q: Why is quantum computing so cold? What temperature do quantum computers operate at?

Q: What is quantum supremacy and has it been achieved?

Q: Is quantum computing dangerous for cybersecurity?

Q: What programming language is used for quantum computing?

Q: When will quantum computing be practically useful for everyday business?

Quantum computing for beginners is not something you need a physics PhD to engage with, and with the milestones of 2025 behind us, there has never been a better time to start. The breakthroughs are real. The investment is accelerating. The jobs are waiting. And the tools are free.

Start with one qubit. Run your first circuit on IBM Quantum Platform. Read one chapter of the Qiskit textbook. The journey from “quantum computing for beginners” to genuine quantum programmer is measured in weeks and months — not years. The quantum era is not coming. It is already here.

Questions, ideas, or your challenge answer? We’d love to hear from you at contact@widelamp.com — and we guarantee a reward for the best explanation submitted this month.

Article last updated May 2025.